ROS2 packages for using Intel RealSense D400 cameras.

Latest release notes

Intel RealSense ROS1 Wrapper

The Intel RealSense ROS1 Wrapper is no longer supported, since our development team is focusing on the ROS2 distros. For the ROS1 wrapper, go to the ros1-legacy branch.

Moving from ros2-legacy to ros2-development

- Changed Parameters:

- "stereo_module", "l500_depth_sensor" are replaced by "depth_module"

- For video streams: <module>.profile replaces <stream>_width, <stream>_height, <stream>_fps

- ROS2-legacy (Old):

- ros2 launch realsense2_camera rs_launch.py depth_width:=640 depth_height:=480 depth_fps:=30.0 infra1_width:=640 infra1_height:=480 infra1_fps:=30.0

- ROS2-development (New):

- ros2 launch realsense2_camera rs_launch.py depth_module.profile:=640x480x30

- Removed parameters <stream>_frame_id, <stream>_optical_frame_id. frame_ids are now defined by camera_name

- "filters" is removed. All filters (or post-processing blocks) are enabled/disabled using "<filter>.enable"

- "align_depth" is now a regular processing block and as such the parameter for enabling it is replaced with "align_depth.enable"

- "allow_no_texture_points", "ordered_pc" now belong to the pointcloud filter and as such are replaced by "pointcloud.allow_no_texture_points", "pointcloud.ordered_pc"

- "pointcloud_texture_stream", "pointcloud_texture_index" now belong to the pointcloud filter and were renamed to match their librealsense names: "pointcloud.stream_filter", "pointcloud.stream_index_filter"

- Allow enable/disable of sensors in runtime (parameters <stream>.enable)

- Allow enable/disable of filters in runtime (parameters <filter_name>.enable)

- unite_imu_method parameter is now changeable in runtime.

- enable_sync parameter is now changeable in runtime.
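The parameter change for video streams can be sketched as a small conversion helper. This is an illustrative sketch only: the function name `legacy_to_profile_args` is hypothetical, and the stream-to-module mapping (in particular `color` to `rgb_camera`) is an assumption, not part of the wrapper.

```python
def legacy_to_profile_args(legacy):
    """Convert legacy <stream>_width/_height/_fps args to <module>.profile args."""
    # Assumed mapping from stream name to the sensor module that owns it.
    stream_to_module = {
        "depth": "depth_module",
        "infra1": "depth_module",
        "infra2": "depth_module",
        "color": "rgb_camera",
    }
    profiles = {}
    for stream, module in stream_to_module.items():
        keys = [f"{stream}_{suffix}" for suffix in ("width", "height", "fps")]
        if not all(key in legacy for key in keys):
            continue  # this stream was not configured in the legacy arguments
        width, height, fps = (int(float(legacy[key])) for key in keys)
        profile = f"{width}x{height}x{fps}"
        # Streams of the same module share one profile, so they must agree.
        if profiles.setdefault(f"{module}.profile", profile) != profile:
            raise ValueError(f"conflicting legacy settings for {module}")
    return profiles


legacy_args = {
    "depth_width": "640", "depth_height": "480", "depth_fps": "30.0",
    "infra1_width": "640", "infra1_height": "480", "infra1_fps": "30.0",
}
new_args = legacy_to_profile_args(legacy_args)
print(" ".join(f"{name}:={value}" for name, value in new_args.items()))
```

For the legacy command shown above, the six width/height/fps arguments collapse into a single depth_module.profile argument.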

Step 1: Install the ROS2 distribution

-

- ROS2 Foxy

- ROS2 Galactic (deprecated)

Step 2: Install latest Intel® RealSense™ SDK 2.0

-

- Jetson users - use the Jetson Installation Guide

- Otherwise, install from Linux Debian Installation Guide

- In this case treat yourself as a developer: make sure to follow the instructions to also install librealsense2-dev and librealsense2-dkms packages

-

Option 2: Install librealsense2 (without graphical tools and examples) debian package from ROS servers:

- Configure your Ubuntu repositories

- Install all realsense ROS packages by

sudo apt install ros-<ROS_DISTRO>-librealsense2*

- For example, for Humble distro:

sudo apt install ros-humble-librealsense2*

-

- Download the latest Intel® RealSense™ SDK 2.0

- Follow the instructions under Linux Installation

Step 3: Install Intel® RealSense™ ROS2 wrapper

- Configure your Ubuntu repositories

- Install all realsense ROS packages by

sudo apt install ros-<ROS_DISTRO>-realsense2-*

- For example, for Humble distro:

sudo apt install ros-humble-realsense2-*

-

Create a ROS2 workspace

mkdir -p ~/ros2_ws/src

cd ~/ros2_ws/src/

-

Clone the latest ROS2 Intel® RealSense™ wrapper from here into '~/ros2_ws/src/'

git clone https://github.com/IntelRealSense/realsense-ros.git -b ros2-development

cd ~/ros2_ws

-

Install dependencies

sudo apt-get install python3-rosdep -y

sudo rosdep init # "sudo rosdep init --include-eol-distros" for Eloquent and earlier

rosdep update # "sudo rosdep update --include-eol-distros" for Eloquent and earlier

rosdep install -i --from-path src --rosdistro $ROS_DISTRO --skip-keys=librealsense2 -y

- Build

colcon build

- Source environment

ROS_DISTRO=<YOUR_SYSTEM_ROS_DISTRO> # set your ROS_DISTRO: humble, galactic, foxy

source /opt/ros/$ROS_DISTRO/setup.bash

cd ~/ros2_ws

. install/local_setup.bash

ros2 run realsense2_camera realsense2_camera_node

# or, with parameters, for example - temporal and spatial filters are enabled:

ros2 run realsense2_camera realsense2_camera_node --ros-args -p enable_color:=false -p spatial_filter.enable:=true -p temporal_filter.enable:=true

ros2 launch realsense2_camera rs_launch.py

ros2 launch realsense2_camera rs_launch.py depth_module.profile:=1280x720x30 pointcloud.enable:=true
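When the launch command is assembled from a script, the name:=value arguments can be generated from a dictionary. The following is a minimal sketch; `build_launch_command` is a hypothetical helper, not part of the wrapper, and it only builds the argument list without running anything.

```python
import shlex


def build_launch_command(params, launch_file="rs_launch.py"):
    """Build the argument list for a realsense2_camera launch command."""
    args = ["ros2", "launch", "realsense2_camera", launch_file]
    for name, value in params.items():
        if isinstance(value, bool):
            # Launch arguments are written in lowercase: true / false.
            value = "true" if value else "false"
        args.append(f"{name}:={value}")
    return args


command = build_launch_command({
    "depth_module.profile": "1280x720x30",
    "pointcloud.enable": True,
})
print(shlex.join(command))
```

The resulting list can be passed to `subprocess.run(command)` on a machine where ROS2 and the wrapper are installed.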

- Each sensor has a unique set of parameters.

- Video sensors, such as depth_module or rgb_camera have, at least, the 'profile' parameter.

- The profile parameter is a string of the following format: <width>X<height>X<fps> (The dividing character can be X, x or ",". Spaces are ignored.)

- For example:

depth_module.profile:=640x480x30

- Since infra1, infra2 and depth are all streams of the depth_module, their width, height and fps are defined by their common sensor.

- If the specified combination of parameters is not supported by the device, the default configuration will be used.
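The profile string format described above can be illustrated with a small parser. This is a sketch of the format only, not the wrapper's own parsing code; `parse_profile` is a hypothetical name, and accepting a mix of separators in one string is an assumption.

```python
import re


def parse_profile(profile):
    """Parse '<width>X<height>X<fps>' into a (width, height, fps) tuple."""
    # Spaces are ignored; the dividing character may be 'X', 'x' or ','.
    parts = re.split(r"[xX,]", profile.replace(" ", ""))
    if len(parts) != 3 or not all(part.isdigit() for part in parts):
        raise ValueError(f"invalid profile string: {profile!r}")
    width, height, fps = (int(part) for part in parts)
    return width, height, fps


print(parse_profile("640x480x30"))
print(parse_profile("1280 X 720, 30"))
```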

- For the entire list of parameters type

ros2 param list

- For reading a parameter value use

ros2 param get <node> <parameter_name>

- For example:

ros2 param get /camera/camera depth_module.emitter_on_off

- For setting a new value for a parameter use

ros2 param set <node> <parameter_name> <value>

- For example:

ros2 param set /camera/camera depth_module.emitter_on_off true

- All of the filters and sensors inner parameters.

- enable_<stream_name>:

- Choose whether to enable a specified stream or not. Default is true for images and false for orientation streams.

- <stream_name> can be any of infra1, infra2, color, depth, fisheye, fisheye1, fisheye2, gyro, accel, pose.

- For example:

enable_infra1:=true enable_color:=false

- enable_sync:

- Gathers the closest frames from different sensors (infrared, color and depth) so that they are sent with the same timestamp.

- This happens automatically when such filters as pointcloud are enabled.

- <stream_type>_qos:

- Sets the QoS by which the topic is published.

- <stream_type> can be any of infra, color, fisheye, depth, gyro, accel, pose.

- Available values are the following strings:

SYSTEM_DEFAULT, DEFAULT, PARAMETER_EVENTS, SERVICES_DEFAULT, PARAMETERS, SENSOR_DATA

- For example:

depth_qos:=SENSOR_DATA

- Reference: ROS2 QoS profiles formal documentation

- Notice: <stream_type>_info_qos refers to both camera_info topics and metadata topics.

- tf_publish_rate:

- double, rate (in Hz) at which dynamic transforms are published

- Default value is 0.0 Hz (means no dynamic TF)

- This param also depends on publish_tf param

- If publish_tf:=false, then no TFs will be published, even if tf_publish_rate is >0.0 Hz

- If publish_tf:=true and tf_publish_rate set to >0.0 Hz, then dynamic TFs will be published at the specified rate

-

serial_no:

- will attach to the device with the given serial number (serial_no).

- Default, attach to the first (in an inner list) RealSense device.

- Note: serial number should be defined with "_" prefix.

- This is a workaround for ROS2's automatic conversion of strings containing only digits into integers, until a better method is found.

- Example: serial number 831612073525 can be set in command line as

serial_no:=_831612073525

-

usb_port_id:

- will attach to the device with the given USB port (usb_port_id).

- For example:

usb_port_id:=4-1 or usb_port_id:=4-2

- Default, ignore USB port when choosing a device.

-

device_type:

- will attach to a device whose name includes the given device_type regular expression pattern.

- Default, ignore device type.

- For example:

device_type:=d435 will match d435 and d435i.

device_type:=d435(?!i) will match d435 but not d435i.
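The effect of the two device_type patterns can be checked with Python's `re` module. This sketch assumes the pattern is searched anywhere in the device name and compared case-insensitively; both are assumptions about the wrapper's matching, and the device names below are only examples.

```python
import re


def matches_device_type(device_name, pattern):
    """Return True if the pattern is found anywhere in the device name."""
    # Assumption: substring search, ignoring case.
    return re.search(pattern, device_name, re.IGNORECASE) is not None


device_names = ["Intel RealSense D435", "Intel RealSense D435I"]
for pattern in ("d435", "d435(?!i)"):
    matched = [name for name in device_names if matches_device_type(name, pattern)]
    print(pattern, "->", matched)
```

The negative lookahead `(?!i)` rejects any match of d435 that is immediately followed by an "i", which is why the second pattern excludes the D435i.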

-

reconnect_timeout:

- When the driver cannot connect to the device, it tries to reconnect after this timeout (in seconds).

- For example:

reconnect_timeout:=10

-

wait_for_device_timeout:

- If the specified device is not found, the node will wait wait_for_device_timeout seconds before exiting.

- Default, wait_for_device_timeout < 0, will wait indefinitely.

- For example:

wait_for_device_timeout:=60

-

rosbag_filename:

- Publish topics from rosbag file. There are two ways for loading rosbag file:

- Command line -

ros2 run realsense2_camera realsense2_camera_node --ros-args -p rosbag_filename:="/full/path/to/rosbag.bag"

- Launch file - set the rosbag_filename parameter to the rosbag full path (see realsense2_camera/launch/rs_launch.py as reference)

-

initial_reset:

- On occasion the device is not closed properly and, due to firmware issues, needs to be reset.

- If set to true, the device will reset prior to usage.

- For example:

initial_reset:=true

-

<stream_name>_frame_id, <stream_name>_optical_frame_id, aligned_depth_to_<stream_name>_frame_id: Specify the different frame_id for the different frames. Especially important when using multiple cameras.

-

base_frame_id: defines the frame_id that all static transformations refer to.

-

odom_frame_id: defines the origin coordinate system in ROS convention (X-Forward, Y-Left, Z-Up). pose topic defines the pose relative to that system.

-

unite_imu_method:

- D400 cameras have built-in IMU components which produce 2 unrelated streams, each with its own frequency:

- gyro - which shows angular velocity

- accel - which shows linear acceleration.

- By default, 2 corresponding topics are available, each with only the relevant fields of the sensor_msgs::Imu message filled out.

- Setting unite_imu_method creates a new topic, imu, that replaces the default gyro and accel topics.

- The imu topic is published at the rate of the gyro.

- All the fields of the Imu message under the imu topic are filled out.

- unite_imu_method parameter supported values are [0-2], meaning [0 -> None, 1 -> Copy, 2 -> Linear_interpolation], where:

- linear_interpolation: Every gyro message is paired with an accel message interpolated to the gyro's timestamp.

- copy: Every gyro message is paired with the last accel message.
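The difference between the copy and linear_interpolation methods can be sketched as follows. This is not the wrapper's implementation: the function names and the sample layout (lists of `(timestamp, (x, y, z))` tuples sorted by strictly increasing timestamp) are assumptions made for illustration.

```python
from bisect import bisect_right


def unite_copy(gyro, accel):
    """Pair each gyro sample with the last accel sample at or before it."""
    accel_times = [stamp for stamp, _ in accel]
    united = []
    for stamp, gyro_xyz in gyro:
        index = bisect_right(accel_times, stamp) - 1
        if index < 0:
            continue  # no accel sample has arrived yet
        united.append((stamp, gyro_xyz, accel[index][1]))
    return united


def unite_linear_interpolation(gyro, accel):
    """Pair each gyro sample with accel interpolated to the gyro timestamp."""
    accel_times = [stamp for stamp, _ in accel]
    united = []
    for stamp, gyro_xyz in gyro:
        upper = bisect_right(accel_times, stamp)
        if upper == 0 or upper == len(accel):
            continue  # sketch: skip samples not bracketed by two accel samples
        (t0, a0), (t1, a1) = accel[upper - 1], accel[upper]
        weight = (stamp - t0) / (t1 - t0)
        accel_xyz = tuple(v0 + weight * (v1 - v0) for v0, v1 in zip(a0, a1))
        united.append((stamp, gyro_xyz, accel_xyz))
    return united
```

In both cases one united sample is produced per gyro sample, which matches the imu topic being published at the rate of the gyro.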

-

clip_distance:

- Remove from the depth image all values above a given value (meters). Disable by giving negative value (default)

- For example:

clip_distance:=1.5
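The effect of clip_distance on a depth image can be sketched as below. The assumptions here are that raw depth values are integers in units of `depth_scale` meters (0.001 is used only as an example value) and that clipped pixels are replaced with 0; the function name `clip_depth` is hypothetical.

```python
def clip_depth(depth_raw, depth_scale, clip_distance):
    """Zero out raw depth values that are farther than clip_distance meters."""
    if clip_distance < 0:
        return list(depth_raw)  # a negative value disables clipping
    return [
        0 if value * depth_scale > clip_distance else value
        for value in depth_raw
    ]


raw_row = [500, 1400, 1600, 3000]  # example raw values, 1 unit = 1 mm here
print(clip_depth(raw_row, 0.001, 1.5))
print(clip_depth(raw_row, 0.001, -1.0))
```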

-

linear_accel_cov, angular_velocity_cov: sets the variance given to the Imu readings.

-

hold_back_imu_for_frames: Image processing takes time. Therefore there is a time gap between the moment the image arrives at the wrapper and the moment the image is published to the ROS environment. During this time, Imu messages keep on arriving, and a situation is created where an image with an earlier timestamp is published after an Imu message with a later timestamp. If that is a problem, setting hold_back_imu_for_frames to true will hold the Imu messages back while processing the images and then publish them all in a burst, thus keeping the order of publication as the order of arrival. Note that in either case, the timestamp in each message's header reflects the time of its origin.

-

publish_tf:

- boolean, enable/disable publishing static and dynamic TFs

- Defaults to True

- So, static TFs will be published by default

- If dynamic TFs are needed, user should set the param tf_publish_rate to >0.0 Hz

- If set to false, both static and dynamic TFs won't be published, even if the param tf_publish_rate is set to >0.0 Hz
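The interaction between publish_tf and tf_publish_rate described above amounts to the following decision logic. This is a sketch of the documented behavior only; `published_transforms` is a hypothetical name.

```python
def published_transforms(publish_tf, tf_publish_rate):
    """Return which kinds of TFs are published for the given parameters."""
    if not publish_tf:
        return set()  # nothing is published, whatever the rate
    transforms = {"static"}
    if tf_publish_rate > 0.0:
        transforms.add("dynamic")
    return transforms


print(published_transforms(True, 0.0))
print(published_transforms(True, 10.0))
print(published_transforms(False, 10.0))
```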

-

diagnostics_period:

- double, positive values set the period between diagnostics updates on the /diagnostics topic.

- 0 or negative values mean no diagnostics topic is published. Defaults to 0.

The /diagnostics topic includes information regarding the device temperatures and actual frequency of the enabled streams.

-

publish_odom_tf: If True (default) publish TF from odom_frame to pose_frame.

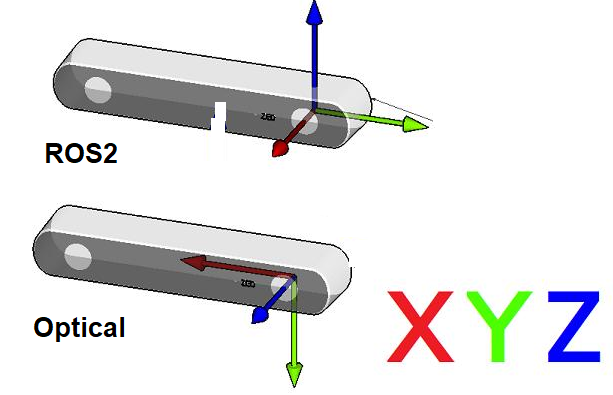

- Point Of View:

- Imagine we are standing behind the camera, and looking forward.

- Always use this point of view when talking about coordinates, left vs right IRs, position of sensor, etc.

- ROS2 Coordinate System: (X: Forward, Y: Left, Z: Up)

- Camera Optical Coordinate System: (X: Right, Y: Down, Z: Forward)

- References: REP-0103, REP-0105

- All data published in our wrapper topics is optical data taken directly from our camera sensors.

- Static and dynamic TF topics publish both the optical CS and the ROS CS, to give the user the ability to move from one CS to the other.

- TF msg expresses a transform from coordinate frame "header.frame_id" (source) to the coordinate frame child_frame_id (destination) Reference
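The relation between the two axis conventions listed above can be written as a pair of small functions. This sketch covers only the axis mapping between an optical frame and a ROS frame that share the same origin; it does not reproduce the actual TF values published by the wrapper, and the function names are hypothetical.

```python
def optical_to_ros(point):
    """Optical (X: Right, Y: Down, Z: Forward) -> ROS (X: Forward, Y: Left, Z: Up)."""
    x, y, z = point
    return (z, -x, -y)


def ros_to_optical(point):
    """ROS (X: Forward, Y: Left, Z: Up) -> optical (X: Right, Y: Down, Z: Forward)."""
    x, y, z = point
    return (-y, -z, x)


# A point 1 m in front of the camera, 0.1 m to the right and 0.2 m below.
print(optical_to_ros((0.1, 0.2, 1.0)))
```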

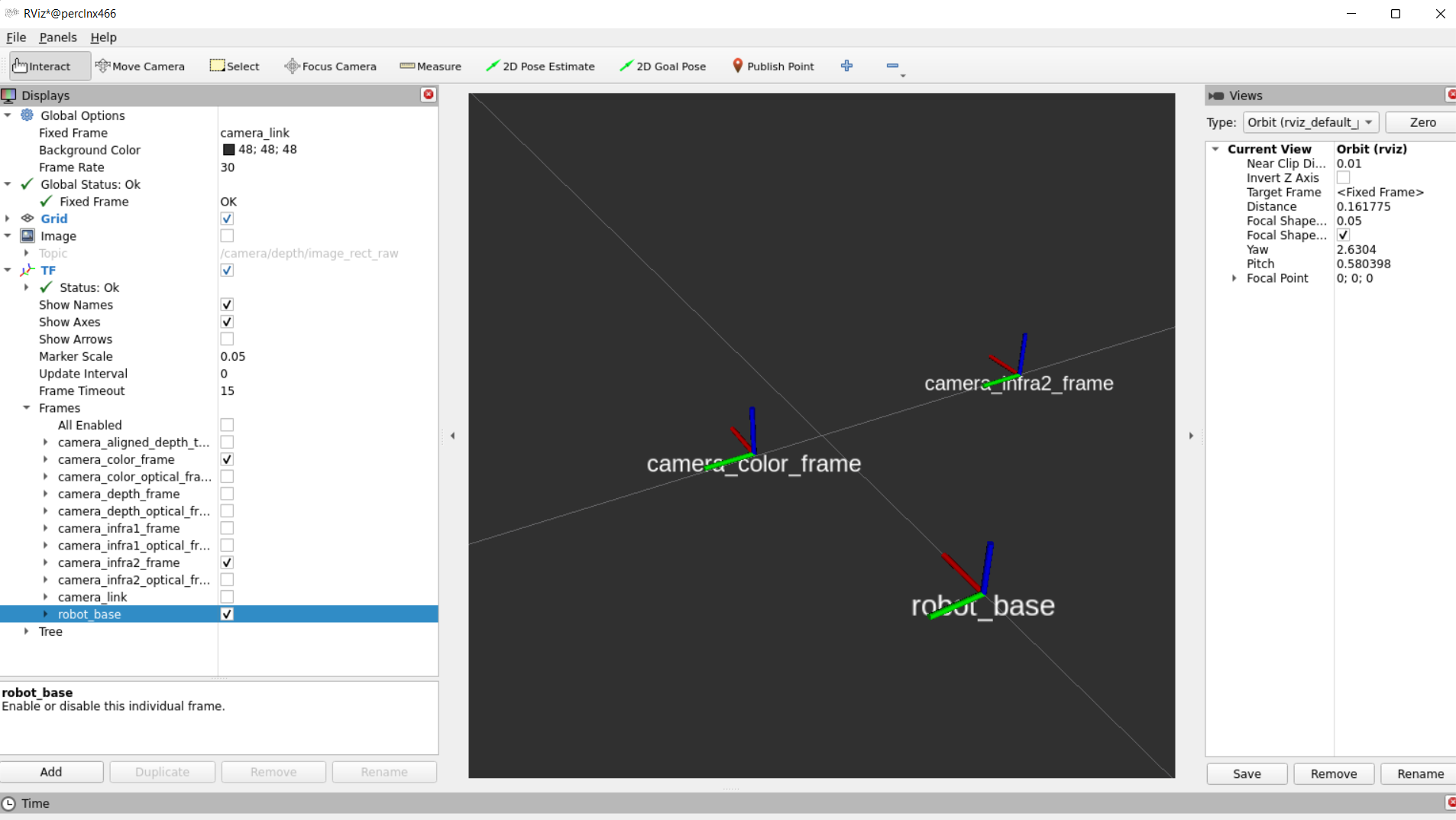

- In RealSense cameras, the origin point (0,0,0) is taken from the left IR (infra1) position and named as "camera_link" frame

- Depth, left IR and "camera_link" coordinates converge together.

- Our wrapper provides static TFs between each sensor coordinate and the camera base (camera_link)

- Also, it provides TFs from each sensor's ROS coordinates to its corresponding optical coordinates.

- Example of static TFs of the RGB sensor and the Infra2 (right infra) sensor of a D435i module, as shown in rviz2:

- Extrinsic from sensor A to sensor B means the position and orientation of sensor A relative to sensor B.

- Imagine that B is the origin (0,0,0), then Extrinsics(A->B) describes where sensor A is relative to sensor B.

- For example, depth_to_color, in D435i:

- If we look from behind the D435i, the extrinsic from depth to color means where the depth sensor is relative to the color sensor.

- If we just look at the X coordinates, in the optical coordinates (again, from behind) and assume that the COLOR (RGB) sensor is (0,0,0), we can say that the DEPTH sensor is to the right of RGB by 0.0148m (1.48cm).

administrator@perclnx466 ~/ros2_humble $ ros2 topic echo /camera/extrinsics/depth_to_color

rotation:

- 0.9999583959579468

- 0.008895332925021648

- -0.0020127370953559875

- -0.008895229548215866

- 0.9999604225158691

- 6.045500049367547e-05

- 0.0020131953060626984

- -4.254872692399658e-05

- 0.9999979734420776

translation:

- 0.01485931035131216

- 0.0010161789832636714

- 0.0005317096947692335

---

- Extrinsic msg is made up of two parts:

- float64[9] rotation (Column-major 3x3 rotation matrix)

- float64[3] translation (Three-element translation vector, in meters)
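The layout of the Extrinsic message can be illustrated by rebuilding the 3x3 matrix from the column-major array and applying it to a point. This is a sketch using the depth_to_color values echoed above; the helper names are hypothetical, and the convention that a point p in the source frame maps to R * p + translation in the target frame is stated here as an assumption.

```python
def rotation_matrix(rotation):
    """Rebuild a 3x3 matrix (list of rows) from a column-major float[9]."""
    # Column-major: element (row, col) is stored at index col * 3 + row.
    return [[rotation[col * 3 + row] for col in range(3)] for row in range(3)]


def transform_point(rotation, translation, point):
    """Map a point from the source sensor frame to the target sensor frame."""
    matrix = rotation_matrix(rotation)
    return tuple(
        sum(matrix[row][col] * point[col] for col in range(3)) + translation[row]
        for row in range(3)
    )


rotation = [
    0.9999583959579468, 0.008895332925021648, -0.0020127370953559875,
    -0.008895229548215866, 0.9999604225158691, 6.045500049367547e-05,
    0.0020131953060626984, -4.254872692399658e-05, 0.9999979734420776,
]
translation = [0.01485931035131216, 0.0010161789832636714, 0.0005317096947692335]

# The depth origin expressed in the color frame is the translation itself.
depth_origin_in_color = transform_point(rotation, translation, (0.0, 0.0, 0.0))
print(depth_origin_in_color)
```

The X component of the result is about 0.0149 m, matching the statement that the depth sensor sits roughly 1.48 cm to the right of the RGB sensor.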

The published topics differ according to the device and parameters.

After running the above command with D435i attached, the following list of topics will be available (This is a partial list. For full one type ros2 topic list):

- /camera/aligned_depth_to_color/camera_info

- /camera/aligned_depth_to_color/image_raw

- /camera/color/camera_info

- /camera/color/image_raw

- /camera/color/metadata

- /camera/depth/camera_info

- /camera/depth/color/points

- /camera/depth/image_rect_raw

- /camera/depth/metadata

- /camera/extrinsics/depth_to_color

- /camera/imu

- /diagnostics

- /parameter_events

- /rosout

- /tf_static

This will stream relevant camera sensors and publish on the appropriate ROS topics.

Enabling accel and gyro is achieved either by adding the following parameters to the command line:

ros2 launch realsense2_camera rs_launch.py pointcloud.enable:=true enable_gyro:=true enable_accel:=true

or in runtime using the following commands:

ros2 param set /camera/camera enable_accel true

ros2 param set /camera/camera enable_gyro true

Enabling a stream adds matching topics. For instance, enabling the gyro and accel streams adds the following topics:

- /camera/accel/imu_info

- /camera/accel/metadata

- /camera/accel/sample

- /camera/extrinsics/depth_to_accel

- /camera/extrinsics/depth_to_gyro

- /camera/gyro/imu_info

- /camera/gyro/metadata

- /camera/gyro/sample

The metadata messages store the camera's available metadata in a json format. To learn more, a dedicated script for echoing a metadata topic in runtime is attached. For instance, use the following command to echo the camera/depth/metadata topic:

python3 src/realsense-ros/realsense2_camera/scripts/echo_metadada.py /camera/depth/metadata
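Since the metadata is carried as JSON text, a subscriber can decode it with the standard `json` module. The sketch below works on a plain string; the sample payload and its keys are made up for illustration and do not list the fields a real camera reports, and `parse_metadata` is a hypothetical helper.

```python
import json


def parse_metadata(json_text):
    """Decode the JSON text of a metadata message into a dictionary."""
    metadata = json.loads(json_text)
    if not isinstance(metadata, dict):
        raise ValueError("expected a JSON object")
    return metadata


# Illustrative payload only; real messages contain device-dependent keys.
sample = '{"frame_number": 120, "clock_domain": "global_time"}'
for key, value in sorted(parse_metadata(sample).items()):
    print(f"{key}: {value}")
```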

The following post processing filters are available:

-

align_depth: If enabled, will publish the depth image aligned to the color image on the topic /camera/aligned_depth_to_color/image_raw.

- The pointcloud, if created, will be based on the aligned depth image.

-

colorizer: will color the depth image. On the depth topic an RGB image will be published, instead of the 16bit depth values.

-

pointcloud: will add a pointcloud topic /camera/depth/color/points.

- The texture of the pointcloud can be modified using the pointcloud.stream_filter parameter.

- The depth FOV and the texture FOV are not similar. By default, pointcloud is limited to the section of depth containing the texture. You can have a full depth to pointcloud, coloring the regions beyond the texture with zeros, by setting pointcloud.allow_no_texture_points to true.

- pointcloud is of an unordered format by default. This can be changed by setting pointcloud.ordered_pc to true.

-

hdr_merge: Allows depth image to be created by merging the information from 2 consecutive frames, taken with different exposure and gain values.

-

The way to set exposure and gain values for each sequence in runtime is by first selecting the sequence id, using the depth_module.sequence_id parameter, and then modifying depth_module.gain and depth_module.exposure.

-

To view the effect on the infrared image for each sequence id use the sequence_id_filter.sequence_id parameter.

-

To initialize these parameters in start time use the following parameters:

depth_module.exposure.1

depth_module.gain.1

depth_module.exposure.2

depth_module.gain.2

-

For in-depth review of the subject please read the accompanying white paper.

-

The following filters have detailed descriptions in: https://github.com/IntelRealSense/librealsense/blob/master/doc/post-processing-filters.md

- disparity_filter - convert depth to disparity before applying other filters and back.

- spatial_filter - filter the depth image spatially.

- temporal_filter - filter the depth image temporally.

- hole_filling_filter - apply hole-filling filter.

- decimation_filter - reduces depth scene complexity.

Each of the above filters has its own parameters, following the naming convention of <filter_name>.<parameter_name> including a <filter_name>.enable parameter to enable/disable it.

- device_info: retrieve information about the device - serial_number, firmware_version etc. Type ros2 interface show realsense2_camera_msgs/srv/DeviceInfo for the full list. Call example:

ros2 service call /camera/device_info realsense2_camera_msgs/srv/DeviceInfo

Our ROS2 Wrapper node supports zero-copy communications if loaded in the same process as a subscriber node. This can reduce copy times on image/pointcloud topics, especially with big frame resolutions and high FPS.

You will need to launch a component container and launch our node as a component together with other component nodes. Further details on "Composing multiple nodes in a single process" can be found here.

Further details on efficient intra-process communication can be found here.

-

Start the component:

ros2 run rclcpp_components component_container

-

Add the wrapper:

ros2 component load /ComponentManager realsense2_camera realsense2_camera::RealSenseNodeFactory -e use_intra_process_comms:=true

Load other component nodes (consumers of the wrapper topics) in the same way.

- Node components are currently not supported on RCLPY

- Compressed images using image_transport will be disabled as this isn't supported with intra-process communication

For getting a sense of the latency reduction, a frame latency reporter tool is available via a launch file.

The launch file loads the wrapper and a frame latency reporter tool component into a single container (so the same process).

The tool prints out the frame latency (now - frame.timestamp) per frame.

The tool is not built unless asked for. Turn on BUILD_TOOLS during build to have it available:

colcon build --cmake-args '-DBUILD_TOOLS=ON'

The launch file accepts a parameter, intra_process_comms, controlling whether zero-copy is turned on or not. Default is on:

ros2 launch realsense2_camera rs_intra_process_demo_launch.py intra_process_comms:=true
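The latency figure reported by the tool (now - frame.timestamp) can be reproduced from a message header stamp as sketched below. This is not the tool's source code; `frame_latency_seconds` is a hypothetical helper, and it assumes the current time and the header stamp come from the same clock.

```python
def frame_latency_seconds(now_ns, stamp_sec, stamp_nanosec):
    """Latency between a frame's header stamp and the current time, in seconds."""
    # A ROS2 header stamp is split into whole seconds and nanoseconds.
    stamp_ns = stamp_sec * 1_000_000_000 + stamp_nanosec
    return (now_ns - stamp_ns) / 1e9


# Example: frame stamped at 1000.025 s, observed at 1000.050 s.
print(frame_latency_seconds(1_000_050_000_000, 1000, 25_000_000))
```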