Using a TensorFlow Pretrained Model to Detect Everyday Objects

Teams can use a custom TensorFlow object detection model to detect objects other than the current season's game elements. This tutorial demonstrates how to use a pretrained model to look for and track everyday objects. It uses the OnBot Java programming tool, but teams can also implement the example with Android Studio or the Blocks programming tool. This is an advanced topic: the tutorial assumes that the user has good programming knowledge and is familiar with Android devices and computer technology.

TensorFlow can be used to recognize everyday objects like a keyboard, a clock, or a cellphone.

This tutorial also assumes that you have already completed the steps in a previous TensorFlow tutorial. If you have not, please do so before continuing.

The custom inference model must be in the form of a TensorFlow Lite (.tflite) file. For this example, we will use the same object detection model that is used in Google's example TensorFlow Object Detection Android app.

The model and its corresponding label map can be downloaded from this link.

The .zip archive contains a file called "detect.tflite". This TensorFlow Lite file is the inference model that TensorFlow will use to recognize the everyday objects. It is based on the MobileNet neural network architecture, which was designed to provide low-latency recognition while still maintaining reasonable accuracy.

The .zip archive also contains a text file called "labelmap.txt". This text file contains a list of labels that correspond to the known objects in the "detect.tflite" model file.

Download the .zip archive to your laptop and uncompress its contents to a folder.

For this example, we want to transfer the .tflite and labelmap files to a directory on your robot controller.

If you are an advanced user and are familiar with using adb, then you can use adb to push the files to the directory "/sdcard/FIRST/tflitemodels" on your robot controller.

If you do not know how to use the Android Debug Bridge tool, then you can use the File Explorer on a Windows laptop to copy and paste the files to this directory.

If you are using an Android phone as your robot controller, then connect the phone to your laptop using a USB cable. Swipe down from the top of the phone's screen to display the menu. Look for an item that indicates the USB mode that the phone is currently in. By default, most phones will be in charging mode.

Tap on the "Android System" item in the menu to expand the item and to get additional details on the current USB mode.

Tap where it says "Tap for more options" to display a screen that will allow you to switch the phone into file transfer mode.

Select the "Transfer files" mode and the phone should now appear as a browsable storage device in your computer's Windows Explorer.

If you are using a REV Robotics Control Hub, power it on with a fully charged 12V battery and connect it to your laptop using a USB Type-C cable. The Control Hub will automatically appear as a browsable storage device in your computer's Windows Explorer. You do not need to switch it to "Transfer files" mode because it is always in this mode.

Use your Windows Explorer to locate and copy the "detect.tflite" and "labelmap.txt" files.

Use Windows Explorer to browse the internal shared storage of your Android device and navigate to the FIRST->tflitemodels directory. Paste the "detect.tflite" and "labelmap.txt" files to this directory.

Now the files are where we want them to be for this example.
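
Before running the op mode, it can be useful to confirm that both files are where the op mode expects them. The following is a minimal sketch, not part of the FTC SDK: the class name ModelFilesCheckSketch and the helper isReadableFile() are hypothetical names chosen for illustration, and the two paths are the ones used in this tutorial. The check is only meaningful when it runs on the robot controller, where the /sdcard/FIRST/tflitemodels directory exists.

```java
import java.io.File;

class ModelFilesCheckSketch {

    // Paths used in this tutorial.
    static final String MODEL_PATH = "/sdcard/FIRST/tflitemodels/detect.tflite";
    static final String LABELS_PATH = "/sdcard/FIRST/tflitemodels/labelmap.txt";

    // Hypothetical helper: true only if the path names a regular file that can be read.
    static boolean isReadableFile(String path) {
        File file = new File(path);
        return file.isFile() && file.canRead();
    }

    public static void main(String[] args) {
        // Report the status of each file instead of failing later during model loading.
        for (String path : new String[] {MODEL_PATH, LABELS_PATH}) {
            String status = isReadableFile(path) ? "found" : "missing or unreadable";
            System.out.println(path + ": " + status);
        }
    }
}
```

In an op mode, the same check could be reported through telemetry before initTfod() is called, so that a missing file is visible on the driver station.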

Use the OnBot Java editor to create a new op mode that is called "TFODEverydayObjects" and select "ConceptTensorFlowObjectDetection" as the sample op mode that will serve as the template for your new op mode. Note that a copy of the full op mode that was used for this example (except for the Vuforia key) is included at the end of this tutorial.

Your op mode will need the following additional import statements:

import com.qualcomm.robotcore.util.RobotLog;
import java.io.BufferedReader;
import java.io.FileReader;
import java.util.ArrayList;

Modify the annotations to change the name to avoid a "collision" with any other op modes on your robot controller that are based on the same sample op mode. Also comment out the @Disabled annotation to enable this op mode.

@TeleOp(name = "TFOD Everyday Objects", group = "Concept")
//@Disabled
public class TFODEverydayObjects extends LinearOpMode {

Modify the sample op mode so that you specify the paths to your model file ("detect.tflite") and to your label map file ("labelmap.txt").

public class TFODEverydayObjects extends LinearOpMode {
    private static final String TFOD_MODEL_FILE = "/sdcard/FIRST/tflitemodels/detect.tflite";
    private static final String TFOD_MODEL_LABELS = "/sdcard/FIRST/tflitemodels/labelmap.txt";
    private String[] labels;

Before you can run your op mode, you must first make sure you have a valid Vuforia developer license key to initialize the Vuforia software. You can obtain a key for free from https://developer.vuforia.com/license-manager. Once you obtain your key, replace the VUFORIA_KEY static String with the actual license key so the Vuforia software will be able to initialize properly.

private static final String VUFORIA_KEY =
        " -- YOUR NEW VUFORIA KEY GOES HERE --- ";

Create a method called readLabels() that will be used to read the label map file from the tflitemodels subdirectory:

/**
 * Read the labels for the object detection model from a file.
 */
private void readLabels() {
    ArrayList<String> labelList = new ArrayList<>();

    // try to read in the labels.
    try (BufferedReader br = new BufferedReader(new FileReader(TFOD_MODEL_LABELS))) {
        int index = 0;
        while (br.ready()) {
            // skip the first row of the labelmap.txt file.
            // if you look at the TFOD Android example project (https://github.com/tensorflow/examples/tree/master/lite/examples/object_detection/android)
            // you will see that the labels for the inference model are actually extracted (as metadata) from the .tflite model file
            // instead of from the labelmap.txt file. if you build and run that example project, you'll see that
            // the label list begins with the label "person" and does not include the first line of the labelmap.txt file ("???").
            // i suspect that the first line of the labelmap.txt file might be reserved for some future metadata schema
            // (or that the generated label map file is incorrect).
            // for now, skip the first line of the label map text file so that your label list is in sync with the embedded label list in the .tflite model.
            if (index == 0) {
                // skip first line.
                br.readLine();
            } else {
                labelList.add(br.readLine());
            }
            index++;
        }
    } catch (Exception e) {
        telemetry.addData("Exception", e.getLocalizedMessage());
        telemetry.update();
    }

    if (labelList.size() > 0) {
        labels = getStringArray(labelList);
        RobotLog.vv("readLabels()", "%d labels read.", labels.length);
        for (String label : labels) {
            RobotLog.vv("readLabels()", " " + label);
        }
    } else {
        RobotLog.vv("readLabels()", "No labels read!");
    }
}

Important note: The readLabels() method skips the first line of the "labelmap.txt" file. If you review Google's example TensorFlow Object Detection Android app carefully, you will notice that the app extracts the label map as metadata from the .tflite file. If you build and run the app, you will see that when the app extracts the labels from the .tflite file's metadata, the first label is "person". To keep your labels in sync with the known objects of the sample .tflite model, the readLabels() method skips the first line of the label map file ("???") and starts with the second line ("person"). I suspect that the first line of the label map file might be reserved for future use (or it might be an error in the file).
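
The skip-the-first-line behavior can be tried on a laptop without a robot controller. The sketch below is an illustration under stated assumptions, not the op mode's own code: the class name LabelMapSketch and the method parseLabels() are hypothetical, the input is a short in-memory stand-in for "labelmap.txt" rather than the real file, and the loop uses the readLine() != null idiom instead of br.ready().

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

class LabelMapSketch {

    // Reads one label per line and discards the first line (the "???" placeholder).
    static String[] parseLabels(Reader source) {
        List<String> labels = new ArrayList<>();
        try (BufferedReader br = new BufferedReader(source)) {
            br.readLine(); // skip the first line; returns null harmlessly if the input is empty
            String line;
            while ((line = br.readLine()) != null) {
                labels.add(line.trim());
            }
        } catch (IOException e) {
            // Wrap the checked exception so callers do not need a throws clause.
            throw new UncheckedIOException(e);
        }
        return labels.toArray(new String[0]);
    }

    public static void main(String[] args) {
        // Illustrative stand-in for the first few lines of a label map file.
        String sample = "???\nperson\nbicycle\ncar\n";
        String[] labels = parseLabels(new StringReader(sample));
        System.out.println(labels.length + " labels, first = " + labels[0]);
    }
}
```

With this sample input the array holds three labels and index 0 is "person", which is the alignment the note above describes.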

You will also need to define the getStringArray() method which the readLabels() method uses to convert an ArrayList to a String array.

// Function to convert ArrayList<String> to String[]
private String[] getStringArray(ArrayList<String> arr)
{
    // declaration and initialize String Array
    String str[] = new String[arr.size()];

    // Convert ArrayList to object array
    Object[] objArr = arr.toArray();

    // Iterating and converting to String
    int i = 0;
    for (Object obj : objArr) {
        str[i++] = (String)obj;
    }

    return str;
}
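
As an alternative to the manual copy loop, the standard Java collections API can do the same conversion in one call. This is a sketch of that alternative, not the code used in the example op mode; the class name LabelArraySketch and the method toLabelArray() are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class LabelArraySketch {

    // List.toArray(T[]) allocates a String[] of the right size and copies the elements.
    static String[] toLabelArray(List<String> labelList) {
        return labelList.toArray(new String[0]);
    }

    public static void main(String[] args) {
        List<String> labelList = new ArrayList<>(Arrays.asList("person", "bicycle", "car"));
        String[] labels = toLabelArray(labelList);
        System.out.println(Arrays.toString(labels)); // prints [person, bicycle, car]
    }
}
```

Either approach yields the String[] that is later passed to loadModelFromFile(); the one-call form simply has less code to maintain.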

Call the readLabels() method to read the label map and generate the labels list. This list will be needed when TensorFlow attempts to load the custom model file.

public void runOpMode() {
    // read the label map text files.
    readLabels();

    // The TFObjectDetector uses the camera frames from the VuforiaLocalizer, so we create that
    // first.
    initVuforia();
    initTfod();

You can set the minimum result confidence level to a relatively low value so that TensorFlow will identify a greater number of objects when you test your op mode. I tested my op mode with a value of 0.6.

tfodParameters.minResultConfidence = 0.6f;
tfodParameters.isModelTensorFlow2 = false;
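
To see why a lower threshold reports more objects, the sketch below filters a list of detections by confidence. It is a conceptual illustration only: the Detection class is a hypothetical stand-in and not the SDK's Recognition type, the sample labels and scores are made up, and whether the SDK compares with >= or > at the threshold is an assumption.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class ConfidenceFilterSketch {

    // Hypothetical stand-in for one detection result (label plus confidence score in [0, 1]).
    static final class Detection {
        final String label;
        final float confidence;

        Detection(String label, float confidence) {
            this.label = label;
            this.confidence = confidence;
        }
    }

    // Keeps only detections whose confidence reaches the threshold (>= is an assumption).
    static List<Detection> keepConfident(List<Detection> all, float minConfidence) {
        List<Detection> kept = new ArrayList<>();
        for (Detection d : all) {
            if (d.confidence >= minConfidence) {
                kept.add(d);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        // Made-up sample scores for illustration.
        List<Detection> all = Arrays.asList(
                new Detection("cell phone", 0.91f),
                new Detection("keyboard", 0.62f),
                new Detection("clock", 0.40f));

        // With a threshold of 0.6, the first two detections pass and the third is dropped.
        for (Detection d : keepConfident(all, 0.6f)) {
            System.out.println(d.label);
        }
    }
}
```

Raising the threshold toward 0.9 in this sketch would leave only the first detection, which is the trade-off between fewer false positives and fewer reported objects.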

Modify the initTfod() method to load the inference model from a file (rather than as an app asset). Include the array of labels that you generated from the label map when you load the custom model file.

if (labels != null) {
    tfod.loadModelFromFile(TFOD_MODEL_FILE, labels);
}

The initTfod() method should now look like this:

/**
 * Initialize the TensorFlow Object Detection engine.
 */
private void initTfod() {
    int tfodMonitorViewId = hardwareMap.appContext.getResources().getIdentifier(
        "tfodMonitorViewId", "id", hardwareMap.appContext.getPackageName());
    TFObjectDetector.Parameters tfodParameters = new TFObjectDetector.Parameters(tfodMonitorViewId);
    tfodParameters.minResultConfidence = 0.6f;
    tfodParameters.isModelTensorFlow2 = false;
    tfodParameters.inputSize = 300;
    tfod = ClassFactory.getInstance().createTFObjectDetector(tfodParameters, vuforia);
    if (labels != null) {
        tfod.loadModelFromFile(TFOD_MODEL_FILE, labels);
    }
}

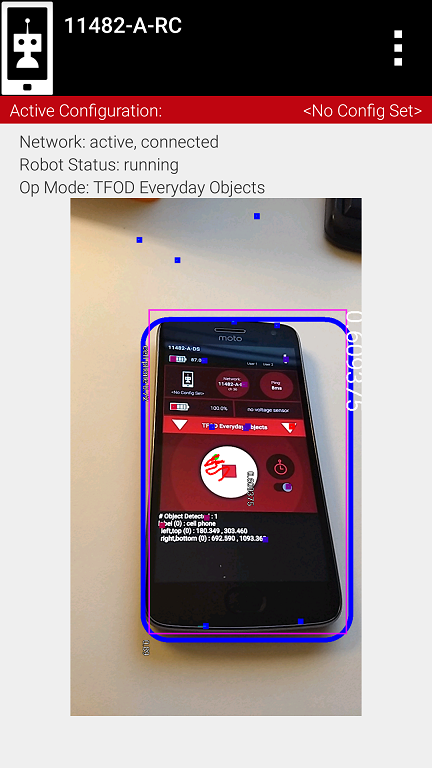

Once you have made these changes to the sample op mode, rebuild the OnBot Java op modes and run the op mode to test it. The robot controller should now be able to detect everyday objects such as a cell phone, a teddy bear, a clock, a computer mouse, and a keyboard. The op mode will draw bounding boxes around recognized objects on the preview screen of the robot controller. You can get a full list of the objects that the model was trained to recognize by looking at the contents of the label map text file.

TensorFlow will recognize everyday objects like a cell phone.

The op mode will also display label information on the driver station (using telemetry) for the objects that it recognizes in its field of view.
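
The position and size values shown in telemetry are derived from the four edges of each bounding box. The sketch below repeats that arithmetic in a standalone form so it can be checked by hand; the class name BoxGeometrySketch and the method describe() are hypothetical, and the edge values are passed in directly instead of being read from a Recognition object.

```java
class BoxGeometrySketch {

    // Returns {row, col, width, height} for a box given by its four edges in pixels.
    static double[] describe(float left, float top, float right, float bottom) {
        double col = (left + right) / 2;           // horizontal center of the box
        double row = (top + bottom) / 2;           // vertical center of the box
        double width = Math.abs(right - left);     // horizontal extent
        double height = Math.abs(top - bottom);    // vertical extent
        return new double[] {row, col, width, height};
    }

    public static void main(String[] args) {
        // Example box: left = 100, top = 50, right = 300, bottom = 250.
        // This gives row = 150, col = 200, width = 200, height = 200.
        double[] box = describe(100f, 50f, 300f, 250f);
        System.out.println(String.format("- Position (Row/Col): %.0f / %.0f", box[0], box[1]));
        System.out.println(String.format("- Size (Width/Height): %.0f / %.0f", box[2], box[3]));
    }
}
```

The center position is often the more useful value for steering toward an object, while the width and height give a rough indication of how close it is.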

You can also use Android Studio to build and deploy this example op mode if you prefer. The same op mode source that you would use for OnBot Java can be built using Android Studio.

You can also use this MobileNet TensorFlow model to detect common objects using a Blocks op mode. However, currently the Blocks development tool does not have support for reading in labels from the label map file. Instead, a Blocks programmer must specify the labels in a list using the "make list from text" programming block.

The example op mode (except for the Vuforia license key) is included below for reference:

/* Copyright (c) 2019 FIRST. All rights reserved.
 *
 * Redistribution and use in source and binary forms, with or without modification,
 * are permitted (subject to the limitations in the disclaimer below) provided that
 * the following conditions are met:
 *
 * Redistributions of source code must retain the above copyright notice, this list
 * of conditions and the following disclaimer.
 *
 * Redistributions in binary form must reproduce the above copyright notice, this
 * list of conditions and the following disclaimer in the documentation and/or
 * other materials provided with the distribution.
 *
 * Neither the name of FIRST nor the names of its contributors may be used to endorse or
 * promote products derived from this software without specific prior written permission.
 *
 * NO EXPRESS OR IMPLIED LICENSES TO ANY PARTY'S PATENT RIGHTS ARE GRANTED BY THIS
 * LICENSE. THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS
 * "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO,
 * THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE
 * ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE
 * FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL
 * DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR
 * SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER
 * CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,
 * OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE
 * OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.
 */

package org.firstinspires.ftc.teamcode;

import com.qualcomm.robotcore.eventloop.opmode.Disabled;
import com.qualcomm.robotcore.eventloop.opmode.LinearOpMode;
import com.qualcomm.robotcore.eventloop.opmode.TeleOp;
import com.qualcomm.robotcore.util.RobotLog;
import java.io.BufferedReader;
import java.io.FileReader;
import java.util.ArrayList;
import java.util.List;
import org.firstinspires.ftc.robotcore.external.ClassFactory;
import org.firstinspires.ftc.robotcore.external.navigation.VuforiaLocalizer;
import org.firstinspires.ftc.robotcore.external.navigation.VuforiaLocalizer.CameraDirection;
import org.firstinspires.ftc.robotcore.external.tfod.TFObjectDetector;
import org.firstinspires.ftc.robotcore.external.tfod.Recognition;

/**
 * This 2022-2023 OpMode illustrates the basics of using the TensorFlow Object Detection API to
 * determine which image is being presented to the robot.
 *
 * Use Android Studio to Copy this Class, and Paste it into your team's code folder with a new name.
 * Remove or comment out the @Disabled line to add this OpMode to the Driver Station OpMode list.
 *
 * IMPORTANT: In order to use this OpMode, you need to obtain your own Vuforia license key as
 * is explained below.
 */
@TeleOp(name = "TFOD Everyday Objects", group = "Concept")
//@Disabled
public class TFODEverydayObjects extends LinearOpMode {
    private static final String TFOD_MODEL_FILE = "/sdcard/FIRST/tflitemodels/detect.tflite";
    private static final String TFOD_MODEL_LABELS = "/sdcard/FIRST/tflitemodels/labelmap.txt";
    private String[] labels;

    /*
     * IMPORTANT: You need to obtain your own license key to use Vuforia. The string below with which
     * 'parameters.vuforiaLicenseKey' is initialized is for illustration only, and will not function.
     * A Vuforia 'Development' license key, can be obtained free of charge from the Vuforia developer
     * web site at https://developer.vuforia.com/license-manager.
     *
     * Vuforia license keys are always 380 characters long, and look as if they contain mostly
     * random data. As an example, here is a fragment of a valid key:
     *      ... yIgIzTqZ4mWjk9wd3cZO9T1axEqzuhxoGlfOOI2dRzKS4T0hQ8kT ...
     * Once you've obtained a license key, copy the string from the Vuforia web site
     * and paste it in to your code on the next line, between the double quotes.
     */
    private static final String VUFORIA_KEY =
            " -- YOUR NEW VUFORIA KEY GOES HERE --- ";

    /**
     * {@link #vuforia} is the variable we will use to store our instance of the Vuforia
     * localization engine.
     */
    private VuforiaLocalizer vuforia;

    /**
     * {@link #tfod} is the variable we will use to store our instance of the TensorFlow Object
     * Detection engine.
     */
    private TFObjectDetector tfod;

    @Override
    public void runOpMode() {
        // read the label map text files.
        readLabels();

        // The TFObjectDetector uses the camera frames from the VuforiaLocalizer, so we create that
        // first.
        initVuforia();
        initTfod();

        /**
         * Activate TensorFlow Object Detection before we wait for the start command.
         * Do it here so that the Camera Stream window will have the TensorFlow annotations visible.
         **/
        if (tfod != null) {
            tfod.activate();

            // The TensorFlow software will scale the input images from the camera to a lower resolution.
            // This can result in lower detection accuracy at longer distances (> 55cm or 22").
            // If your target is at distance greater than 50 cm (20") you can increase the magnification value
            // to artificially zoom in to the center of image. For best results, the "aspectRatio" argument
            // should be set to the value of the images used to create the TensorFlow Object Detection model
            // (typically 16/9).
            tfod.setZoom(1.0, 16.0/9.0);
        }

        /** Wait for the game to begin */
        telemetry.addData(">", "Press Play to start op mode");
        telemetry.update();
        waitForStart();

        if (opModeIsActive()) {
            while (opModeIsActive()) {
                if (tfod != null) {
                    // getUpdatedRecognitions() will return null if no new information is available since
                    // the last time that call was made.
                    List<Recognition> updatedRecognitions = tfod.getUpdatedRecognitions();
                    if (updatedRecognitions != null) {
                        telemetry.addData("# Objects Detected", updatedRecognitions.size());

                        // step through the list of recognitions and display image position/size information for each one
                        // Note: "Image number" refers to the randomized image orientation/number
                        for (Recognition recognition : updatedRecognitions) {
                            double col = (recognition.getLeft() + recognition.getRight()) / 2 ;
                            double row = (recognition.getTop() + recognition.getBottom()) / 2 ;
                            double width = Math.abs(recognition.getRight() - recognition.getLeft()) ;
                            double height = Math.abs(recognition.getTop() - recognition.getBottom()) ;

                            telemetry.addData(""," ");
                            telemetry.addData("Image", "%s (%.0f %% Conf.)", recognition.getLabel(), recognition.getConfidence() * 100 );
                            telemetry.addData("- Position (Row/Col)","%.0f / %.0f", row, col);
                            telemetry.addData("- Size (Width/Height)","%.0f / %.0f", width, height);
                        }
                        telemetry.update();
                    }
                }
            }
        }
    }

    /**
     * Initialize the Vuforia localization engine.
     */
    private void initVuforia() {
        /*
         * Configure Vuforia by creating a Parameter object, and passing it to the Vuforia engine.
         */
        VuforiaLocalizer.Parameters parameters = new VuforiaLocalizer.Parameters();

        parameters.vuforiaLicenseKey = VUFORIA_KEY;
        parameters.cameraDirection = CameraDirection.BACK;

        // Instantiate the Vuforia engine
        vuforia = ClassFactory.getInstance().createVuforia(parameters);
    }

    /**
     * Initialize the TensorFlow Object Detection engine.
     */
    private void initTfod() {
        int tfodMonitorViewId = hardwareMap.appContext.getResources().getIdentifier(
            "tfodMonitorViewId", "id", hardwareMap.appContext.getPackageName());
        TFObjectDetector.Parameters tfodParameters = new TFObjectDetector.Parameters(tfodMonitorViewId);
        tfodParameters.minResultConfidence = 0.6f;
        tfodParameters.isModelTensorFlow2 = false;
        tfodParameters.inputSize = 300;
        tfod = ClassFactory.getInstance().createTFObjectDetector(tfodParameters, vuforia);
        if (labels != null) {
            tfod.loadModelFromFile(TFOD_MODEL_FILE, labels);
        }
    }

    /**
     * Read the labels for the object detection model from a file.
     */
    private void readLabels() {
        ArrayList<String> labelList = new ArrayList<>();

        // try to read in the labels.
        try (BufferedReader br = new BufferedReader(new FileReader(TFOD_MODEL_LABELS))) {
            int index = 0;
            while (br.ready()) {
                // skip the first row of the labelmap.txt file.
                // if you look at the TFOD Android example project (https://github.com/tensorflow/examples/tree/master/lite/examples/object_detection/android)
                // you will see that the labels for the inference model are actually extracted (as metadata) from the .tflite model file
                // instead of from the labelmap.txt file. if you build and run that example project, you'll see that
                // the label list begins with the label "person" and does not include the first line of the labelmap.txt file ("???").
                // i suspect that the first line of the labelmap.txt file might be reserved for some future metadata schema
                // (or that the generated label map file is incorrect).
                // for now, skip the first line of the label map text file so that your label list is in sync with the embedded label list in the .tflite model.
                if (index == 0) {
                    // skip first line.
                    br.readLine();
                } else {
                    labelList.add(br.readLine());
                }
                index++;
            }
        } catch (Exception e) {
            telemetry.addData("Exception", e.getLocalizedMessage());
            telemetry.update();
        }

        if (labelList.size() > 0) {
            labels = getStringArray(labelList);
            RobotLog.vv("readLabels()", "%d labels read.", labels.length);
            for (String label : labels) {
                RobotLog.vv("readLabels()", " " + label);
            }
        } else {
            RobotLog.vv("readLabels()", "No labels read!");
        }
    }

    // Function to convert ArrayList<String> to String[]
    private String[] getStringArray(ArrayList<String> arr)
    {
        // declaration and initialize String Array
        String str[] = new String[arr.size()];

        // Convert ArrayList to object array
        Object[] objArr = arr.toArray();

        // Iterating and converting to String
        int i = 0;
        for (Object obj : objArr) {
            str[i++] = (String)obj;
        }

        return str;
    }
}
